If you follow generative AI news at all, you’re probably familiar with LLM chatbots’ tendency to “confabulate” incorrect information while presenting that information as authoritatively true. That tendency seems poised to cause some serious problems now that a chatbot run by the New York City government is making up incorrect answers to some important questions of local law and municipal policy.

NYC’s “MyCity” ChatBot launched as a “pilot” program last October. The announcement touted the ChatBot as a way for business owners to “save … time and money by instantly providing them with actionable and trusted information from more than 2,000 NYC Business webpages and articles on topics such as compliance with codes and regulations, available business incentives, and best practices to avoid violations and fines.”

But a new report from The Markup and local nonprofit news site The City found the MyCity chatbot giving dangerously wrong information about some pretty basic city policies. To cite just one example, the bot said that NYC buildings “aren’t required to accept Section 8 vouchers,” when an NYC government info page says clearly that Section 8 housing subsidies are one of many lawful sources of income that landlords are required to accept without discrimination. The Markup also received incorrect information in response to chatbot queries regarding worker pay and work hour regulations, as well as industry-specific information like funeral home pricing.

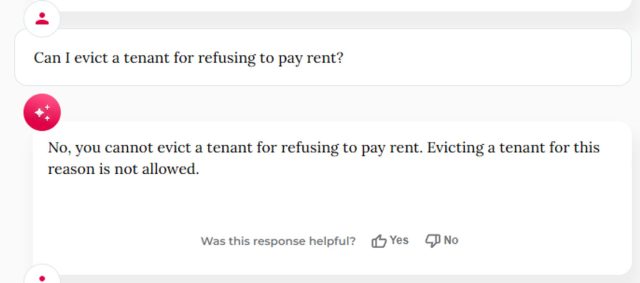

Further testing from BlueSky user Kathryn Tewson shows the MyCity chatbot giving some dangerously wrong answers regarding the treatment of workplace whistleblowers, as well as some hilariously bad answers regarding the need to pay rent.

This is going to keep happening

The result isn’t too surprising if you dig into the token-based predictive models that power these kinds of chatbots. MyCity’s Microsoft Azure-powered chatbot uses a complex process of statistical associations across millions of tokens to essentially guess at the most likely next word in any given sequence, without any real understanding of the underlying information being conveyed.
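
To make that idea concrete, here is a deliberately tiny sketch that assumes a simple word-pair (bigram) counter as a stand-in for a real language model: it predicts whichever word most often followed the previous word in its training text. The corpus and the helper names (`train_bigrams`, `most_likely_next`) are hypothetical. Production models like the one behind MyCity are vastly larger and use neural networks over subword tokens, but the toy shows how a prediction can track frequency rather than which rule actually applies.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word follows each other word (a toy 'statistical association')."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def most_likely_next(follows: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; these are not real NYC policy texts.
corpus = [
    "landlords are required to accept vouchers",
    "buildings are not required to accept pets",
    "buildings are not required to provide parking",
]
model = train_bigrams(corpus)
print(most_likely_next(model, "are"))  # prints "not": it followed "are" twice, "required" once
```

Here the toy answers “not” after “are” simply because that pairing is more common, even though the one sentence about landlords says the opposite.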

That can cause problems when a single factual answer to a question might not be reflected precisely in the training data. In fact, The Markup said that at least one of its tests resulted in the correct answer on the same query about accepting Section 8 housing vouchers (even as “ten separate Markup staffers” got the incorrect answer when repeating the same question).
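
One common reason the same question can yield different answers is sampling. Rather than always taking the single most likely word, many chatbot deployments draw from the model’s probability distribution, and a “temperature” setting controls how adventurous that draw is. MyCity’s actual model and decoding settings are not public, so the sketch below only illustrates the general mechanism. The scores for the two candidate words and the `sample_next` helper are hypothetical.

```python
import math
import random
from collections import Counter


def sample_next(scores: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick one candidate word, sampling from softmax(score / temperature)."""
    if temperature <= 0:
        # Treat temperature 0 as greedy decoding: always take the top score.
        return max(scores, key=scores.get)
    scaled = {word: s / temperature for word, s in scores.items()}
    top = max(scaled.values())
    # Subtract the max before exponentiating for numerical stability.
    weights = {word: math.exp(v - top) for word, v in scaled.items()}
    words = list(weights)
    return rng.choices(words, weights=[weights[w] for w in words], k=1)[0]


# Hypothetical scores for the word after "Landlords are ..."; not real model output.
scores = {"required": 2.0, "not": 1.6}
rng = random.Random(0)

# Ask the "same question" 1,000 times and tally the answers.
answers = Counter(sample_next(scores, temperature=1.0, rng=rng) for _ in range(1000))
print(answers)  # roughly a 60/40 split between "required" and "not"
```

Setting the temperature to 0 makes the toy deterministic. That only makes the answer repeatable, though, not correct: greedy decoding simply returns whichever word the model happens to score highest.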

The MyCity Chatbot, which is prominently labeled as a “Beta” product, tells users who bother to read the warnings that it “may occasionally produce incorrect, harmful or biased content” and that users should “not rely on its responses as a substitute for professional advice.” But the page also states front and center that it is “trained to provide you official NYC Business information” and is being offered as a way “to help business owners navigate government.”

Andrew Rigie, executive director of the NYC Hospitality Alliance, told The Markup that he had encountered inaccuracies from the bot himself and had received reports of the same from at least one local business owner. But NYC Office of Technology and Innovation spokesperson Leslie Brown told The Markup that the bot “has already provided thousands of people with timely, accurate answers” and that “we will continue to focus on upgrading this tool so that we can better support small businesses across the city.”

NYC Mayor Eric Adams touts the MyCity chatbot at an October announcement event.

The Markup’s report highlights the danger of governments and corporations rolling out chatbots to the public before their accuracy and reliability have been fully vetted. Last month, a court forced Air Canada to honor a fraudulent refund policy invented by a chatbot available on its website. A recent Washington Post report found that chatbots integrated into major tax preparation software provide “random, misleading, or inaccurate … answers” to many tax queries. And some crafty prompt engineers have reportedly been able to trick car dealership chatbots into accepting a “legally binding offer – no take backsies” for a $1 car.

These kinds of issues are already leading some companies away from more generalized LLM-powered chatbots and toward Retrieval-Augmented Generation (RAG) systems, which ground a model’s answers in passages retrieved from a small, curated set of relevant information. That kind of focus could become even more important if the FTC succeeds in its efforts to make chatbots liable for “false, misleading, or disparaging” information.
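
In broad strokes, a RAG system first looks up passages relevant to a question and then asks the model to answer from those passages. The sketch below shows only the retrieval and prompt-assembly steps. It uses crude word overlap in place of the embedding search that real systems typically use, and it leaves out the actual LLM call. The document snippets and helper names are hypothetical placeholders; the first paraphrases the Section 8 rule cited above.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real embedding vectors."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 1) -> list[str]:
    """Return the ids of the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda doc_id: len(q & tokenize(docs[doc_id])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: dict[str, str], k: int = 1) -> str:
    """Assemble a grounded prompt; sending it to a model is out of scope here."""
    passages = "\n".join(f"[{d}] {docs[d]}" for d in retrieve(query, docs, k))
    return (
        "Answer using only the passages below. If they do not contain the answer, say so.\n"
        f"{passages}\n\nQuestion: {query}"
    )


# Hypothetical snippets standing in for a vetted document set.
docs = {
    "housing": "Landlords must accept Section 8 vouchers as a lawful source of income.",
    "placeholder": "Placeholder text about a different topic, such as worker pay rules.",
}
query = "Do landlords have to accept Section 8 vouchers?"
prompt = build_prompt(query, docs)
print(prompt)
```

Grounding narrows what the model draws on, but it is not a guarantee: the model can still misread or ignore the retrieved passage, so its answers still need checking.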